Master Class: How to Prompt AI Image Generators, with Techniques for Midjourney, DALL-E, and Flux

As of May 2026, success with AI image prompting comes down to model-specific logic: use descriptive natural language for Flux Pro 1.1 and GPT-Image-1, and structured parameters plus Style References for Midjourney v8.1. Add “Image-to-Prompt” reverse engineering and cinematic directives for professional-grade results.

The 2026 Prompting Logic Matrix: Midjourney v8.1 vs. GPT-Image-1 vs. Flux

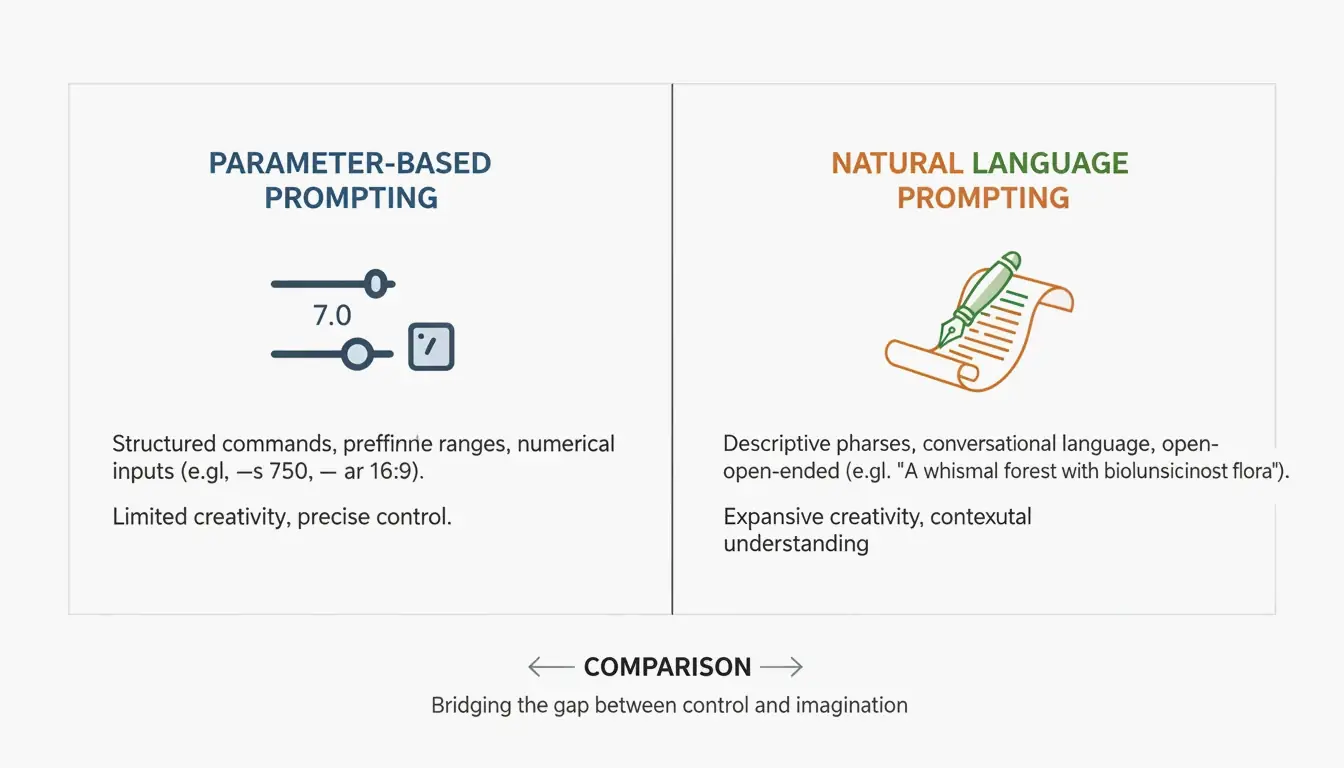

The world of generative AI has moved past simple “keyword stuffing.” In 2026, professional creators have traded random strings of artist names for “intent-based” prompting, where the syntax matches the specific architecture of the model. According to NovaKit, API prices have fallen by a factor of 25 to 40 since 2024. That drop makes high-volume testing affordable, so creators can iterate until the output matches their intent.

Midjourney v8.1 is still the go-to for parameter-driven control. If you want to maintain a specific look, commands like --ar (aspect ratio) and --sref (Style Reference) are your best friends. On the other hand, GPT-Image-1 and Flux Pro 1.1 Ultra work more like a “Director’s Script.” These models are great at following long, natural descriptions. They excel at complex scenes where things need to be in exact spots, like “a red car on the left and a blue car on the right.”

As David Holz, founder of Midjourney, explains, artists use these tools to “rapid prototype” concepts for clients before diving into manual work. The goal in 2026 isn’t to guess what the AI will do, but to treat prompting as a precise engineering discipline.

Framework: The Three-Layer Prompting Structure (Subject, Environment, Technicals)

To get consistent results no matter which model you use, try this modular three-layer framework:

- The Subject: Be specific. Instead of “a pot,” try “a weathered copper kettle.”

- The Environment: Define the vibe. Think about lighting and background, like “harsh midday sun in a high-desert landscape.”

- The Technicals: This is where you talk to the specific model. For Midjourney, use parameters like --stylize. For Flux, give it camera specs like “shot on 35mm lens, f/1.8.”
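The three layers can be sketched as a small helper that assembles one prompt string. This is only an illustration: the function name `build_prompt` and the value `250` for --stylize are assumptions made for the example, not settings recommended by any of the models.

```python
def build_prompt(subject: str, environment: str, technicals: str) -> str:
    """Join the Subject, Environment, and Technicals layers into one prompt."""
    description = f"{subject}, {environment}"
    if technicals.startswith("--"):
        # Parameter-style technicals (Midjourney) go after the description,
        # separated by a space rather than a comma.
        return f"{description} {technicals}"
    # Natural-language technicals (Flux) read as one more descriptive clause.
    return f"{description}, {technicals}"


subject = "a weathered copper kettle"
environment = "harsh midday sun in a high-desert landscape"

# The stylize value is illustrative only.
midjourney_prompt = build_prompt(subject, environment, "--stylize 250")
flux_prompt = build_prompt(subject, environment, "shot on 35mm lens, f/1.8")

print(midjourney_prompt)
print(flux_prompt)
```

Keeping the first two layers identical and swapping only the third makes it easier to compare how each model handles the same scene.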

How to Master Midjourney v8.1: Style References and Aesthetic Control

Midjourney v8.1, released in April 2026, is still the favorite for anyone focused on aesthetics. The real secret for brand consistency is the --sref (Style Reference) tag. By adding a URL to an existing image after this tag, you can force the AI to copy the colors, textures, and overall “vibe” of that reference. This builds on the artistic potential seen in early milestones like Théâtre D’opéra Spatial, which first proved Midjourney could compete in the fine arts.

By 2026, the --personalize code has become a standard part of the workflow. It helps the model learn your personal style over time. If you’re going for photorealism, skip vague words like “ultra-realistic” and use “Lens-Specific” prompts instead. Tell the AI exactly what gear to “use,” such as a “35mm f/1.8” for a blurry background or a “14mm wide-angle” for big architectural shots.
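As a rough sketch, a lens-specific description and the parameters mentioned above can be combined programmatically. The helper name `midjourney_prompt`, the example URL, and the 3:2 ratio are hypothetical. The code only builds the text you would paste into Midjourney and does not call any Midjourney service.

```python
from typing import Optional


def midjourney_prompt(
    description: str,
    aspect_ratio: Optional[str] = None,
    style_ref_url: Optional[str] = None,
) -> str:
    """Append --ar and --sref parameters after the descriptive text."""
    parts = [description.strip()]
    if aspect_ratio:
        parts.append(f"--ar {aspect_ratio}")
    if style_ref_url:
        # The URL should point to the image whose look you want to reuse.
        parts.append(f"--sref {style_ref_url}")
    return " ".join(parts)


prompt = midjourney_prompt(
    "portrait of a ceramic artist in her studio, 35mm f/1.8, shallow depth of field",
    aspect_ratio="3:2",
    style_ref_url="https://example.com/brand-style.png",  # placeholder URL
)
print(prompt)
```

Reusing the same style reference URL across a campaign is what keeps the colors and textures consistent from one image to the next.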

Why Is Flux Pro 1.1 Ultra the New Standard for Precision and ControlNet?

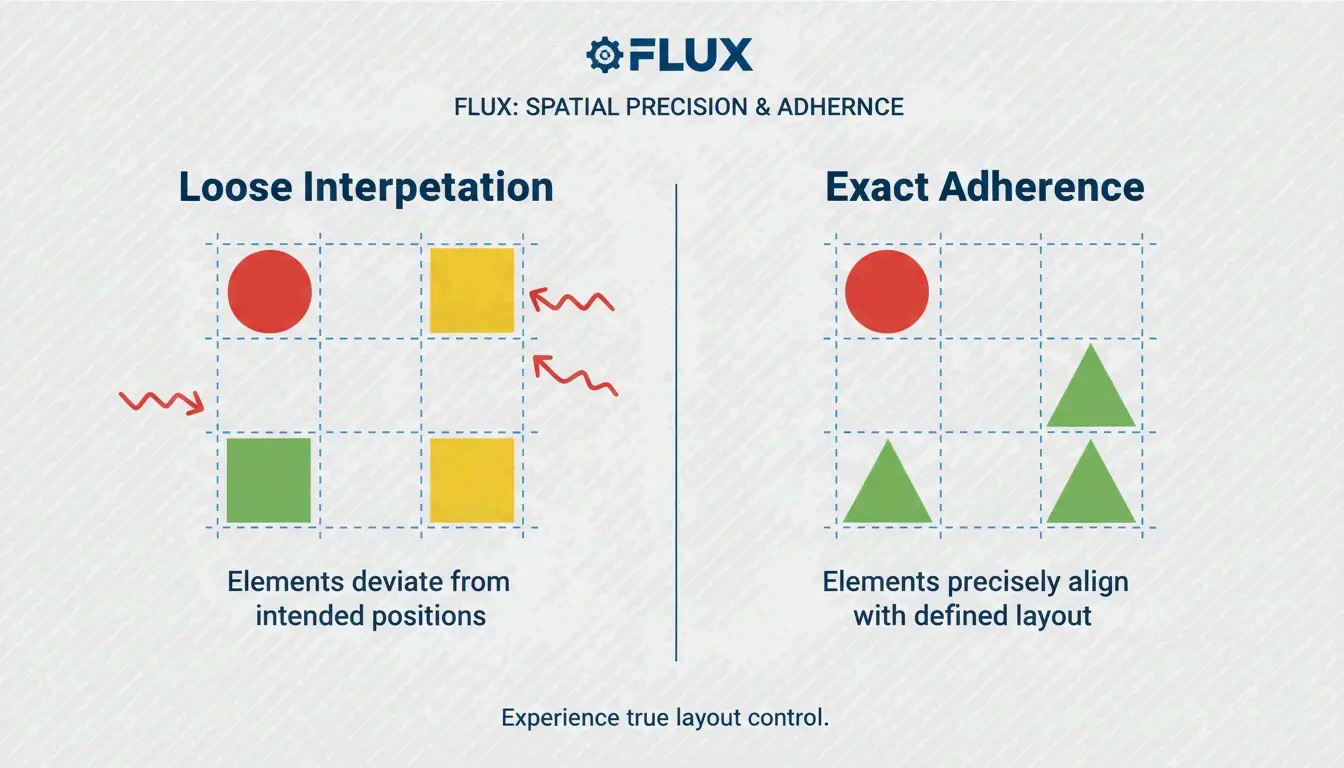

Flux Pro 1.1 Ultra has become a developer favorite because it works so well with ControlNet tools. While Midjourney “interprets” what you say, Flux “adheres” to it. Using ControlNet lets you lock in exact poses, depth maps, and layouts. This ensures your subject stays exactly where you put them in the frame.

Flux also beats GPT-Image-1 when it comes to professional editing tasks like inpainting (fixing parts of an image) and outpainting (expanding an image). Data from NovaKit shows that Flux Pro 1.1 Ultra has the highest “Prompt Adherence” score in the industry for complex scenes. If your project has a strict layout that can’t be left to the AI’s imagination, use Flux.

Commercial Photography: Integrating Imagen 4 for Product Renders

For clean, commercial product shots, Google’s Imagen 4 is often the best pick. It’s particularly good at high-end lighting and avoiding those weird “AI artifacts” on shiny surfaces. NovaKit reports that Imagen 4 delivers the cleanest product images for about $0.03 to $0.12 each, making it a very cost-effective choice for e-commerce catalogs.

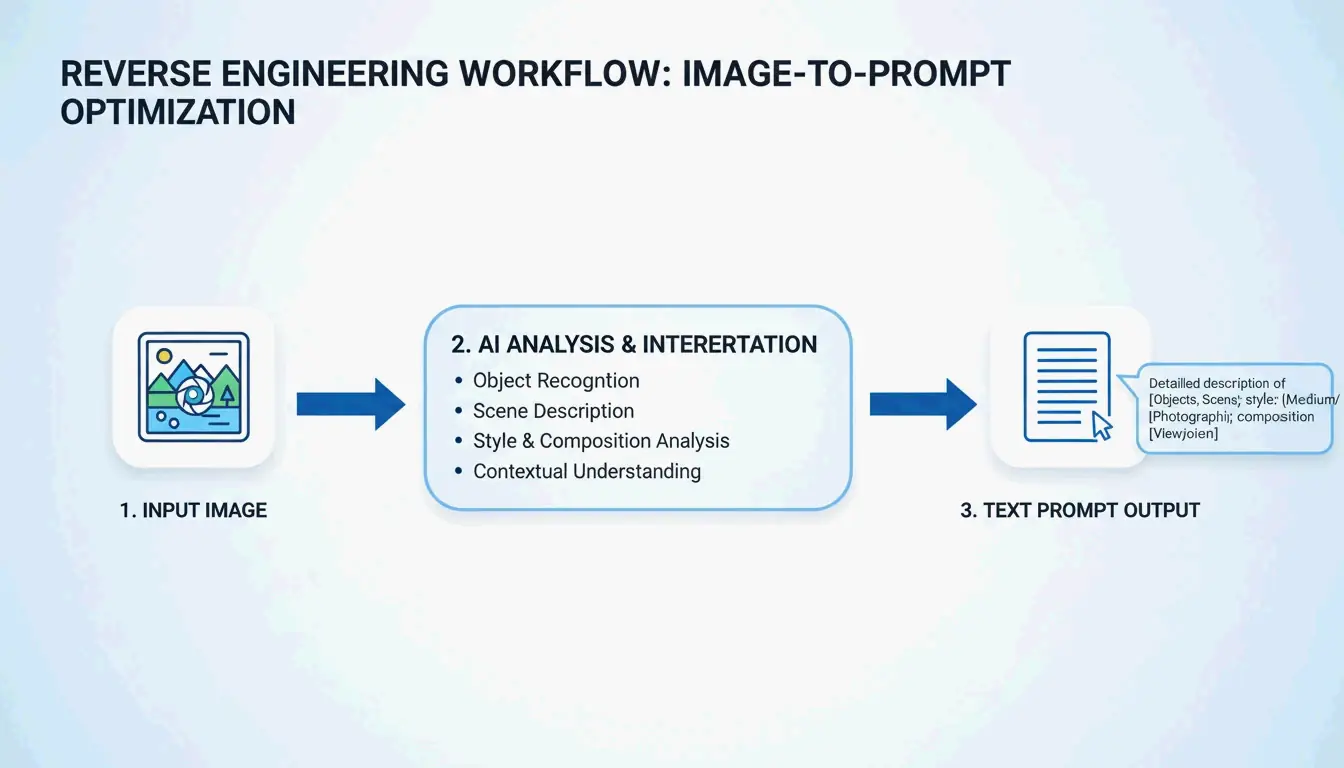

Can You Reverse Engineer Art? Mastering Image-to-Prompt Techniques

In 2026, you don’t always have to start with a blank text box. Tools like PixelPanda let you upload a photo, painting, or screenshot and get four optimized prompts back (General, Flux, Midjourney, and Stable Diffusion).

This “Image-to-Prompt” method is a great way to move between different models. For example, you could take a beautiful render from Midjourney, reverse-engineer the prompt using PixelPanda, and then use that description in Flux Pro 1.1 to get more control over the structure. You can also head over to PromptBase to study the “DNA” of successful prompts and see which keywords are currently moving the needle.

Professional Automation: Scaling Image Generation with MCP Servers and APIs

For big projects, manual prompting is being replaced by automated workflows using the Model Context Protocol (MCP). By setting up an MCP server, developers can let AI agents like Claude or GPT-4 handle the image generation themselves. According to SamurAIGPT, this creates a “Prompt-Generate-Review” loop where the AI manages the whole creative process.
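The shape of a “Prompt-Generate-Review” loop can be sketched without any real service. Everything below is a stand-in: `write_prompt`, `generate`, and `review` are hypothetical stubs, not part of MCP or of any vendor SDK. In a real setup, an agent and an image API reached through an MCP server would take their place.

```python
from typing import Callable, Optional, Tuple


def prompt_generate_review(
    brief: str,
    write_prompt: Callable[[str, Optional[str]], str],
    generate: Callable[[str], str],
    review: Callable[[str], Tuple[bool, str]],
    max_rounds: int = 3,
) -> Tuple[Optional[str], int]:
    """Loop until the reviewer approves an image or the round budget runs out."""
    feedback: Optional[str] = None
    for round_number in range(1, max_rounds + 1):
        prompt = write_prompt(brief, feedback)   # Prompt
        image = generate(prompt)                 # Generate
        approved, feedback = review(image)       # Review
        if approved:
            return image, round_number
    return None, max_rounds


# Hypothetical stubs so the loop can run on its own.
def write_prompt(brief: str, feedback: Optional[str]) -> str:
    return brief if feedback is None else f"{brief}, {feedback}"


def generate(prompt: str) -> str:
    return f"<image for: {prompt}>"


def review(image: str) -> Tuple[bool, str]:
    if "soft studio lighting" in image:
        return True, ""
    return False, "soft studio lighting"


image, rounds = prompt_generate_review(
    "a weathered copper kettle", write_prompt, generate, review
)
print(rounds, image)
```

Capping the number of rounds matters in practice, because every pass through the loop is a billable generation.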

Cost is a big factor here, too. NovaKit notes that a GPT-Image-1 HD render now costs around $0.17. By using bulk generation through the muapi CLI, teams can create hundreds of marketing variations for a fraction of what they’d spend on stock photos or manual design.
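To make the numbers concrete, here is a small arithmetic sketch using the per-image prices quoted in this article ($0.17 for a GPT-Image-1 HD render, $0.03 to $0.12 for Imagen 4). The batch size of 300 and the function name `batch_cost` are illustrative assumptions. Actual billing depends on resolution, tier, and provider.

```python
from typing import Dict

# Per-image prices in USD, taken from the NovaKit figures quoted in this article.
PRICE_PER_IMAGE: Dict[str, float] = {
    "GPT-Image-1 HD": 0.17,
    "Imagen 4 (low end)": 0.03,
    "Imagen 4 (high end)": 0.12,
}


def batch_cost(image_count: int) -> Dict[str, float]:
    """Return the total cost per model for a batch, rounded to cents."""
    return {
        model: round(price * image_count, 2)
        for model, price in PRICE_PER_IMAGE.items()
    }


costs = batch_cost(300)  # 300 is a hypothetical campaign size
for model, total in costs.items():
    print(f"{model}: ${total:.2f} for 300 images")
```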

Conclusion

Prompting in 2026 is no longer a guessing game; it’s a precise skill. The key to professional results is knowing the architectural differences between Midjourney, GPT-Image-1, and Flux. The days of shouting random keywords at an AI are over, replaced by a world of structured parameters, style references, and automated workflows.

Action Plan:

- Define your goal: Use Midjourney v8.1 for artistic projects and “beautiful by default” images.

- Prioritize precision: Use Flux Pro 1.1 Ultra when you need total control over poses or where things are in the frame.

- Target text: Use GPT-Image-1 for UI mockups and any graphics that need readable text.

- Scale: Look into MCP servers and the muapi CLI to automate your work and save money.
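The action plan above can be written down as a simple lookup, which is handy when a script has to choose a model per job. The goal labels (`"aesthetics"`, `"layout"`, `"text"`, `"product"`) and the function name are assumptions made for this sketch. The model choices simply restate this article's recommendations.

```python
# Maps a project goal to the model this article recommends for it.
MODEL_BY_GOAL = {
    "aesthetics": "Midjourney v8.1",
    "layout": "Flux Pro 1.1 Ultra",
    "text": "GPT-Image-1",
    "product": "Imagen 4",
}


def pick_model(goal: str) -> str:
    """Return the recommended model, or raise if the goal is not recognized."""
    try:
        return MODEL_BY_GOAL[goal]
    except KeyError:
        known = ", ".join(sorted(MODEL_BY_GOAL))
        raise ValueError(f"Unknown goal {goal!r}; expected one of: {known}") from None


print(pick_model("layout"))
```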

FAQ

How do I achieve consistent character rendering across multiple images in 2026?

To maintain character consistency, use Midjourney v8.1’s --cref (Character Reference) tag followed by the URL of your base character image. In Flux, the professional standard is utilizing LoRA (Low-Rank Adaptation) weights specifically trained on your character. Additionally, maintaining consistent ‘Seed’ numbers and detailed physical descriptors helps ensure the AI doesn’t drift between generations.

Which AI model currently offers the best integrated text rendering for UI mockups?

As of May 2026, GPT-Image-1 is the industry leader for precise text-in-image rendering, successfully handling signs, labels, and UI elements. Flux Pro 1.1 Ultra is a close second, offering excellent font control through descriptive prompts. While Midjourney v8.1 has significantly improved its text capabilities, it still prioritizes artistic “vibe” and may occasionally struggle with literal character accuracy in complex strings.

Is it possible to generate AI images without using Discord for Midjourney v8.1?

Yes. By May 2026, the Midjourney Web Alpha is fully public, allowing all users to generate and edit images directly through a browser interface. Furthermore, professional users can leverage the official Midjourney API or third-party wrappers like muapi to integrate Midjourney generation into Discord-free, agentic workflows and custom applications.

About the Author

Indie Hacker & Developer

I'm an indie hacker building iOS and web applications, with a focus on creating practical SaaS products. I specialize in AI SEO, constantly exploring how intelligent technologies can drive sustainable growth and efficiency.

Last reviewed May 7, 2026. This article is reviewed for accuracy and updated when tooling or platform behavior changes.